Building Safe AI: Transparency, Accountability, and the Future Impact of Generative AI

Details

| Type | Lecture |

|---|---|

| Intended for | General public |

| Date(s) | May 15, 2026 15:00 — 16:30 |

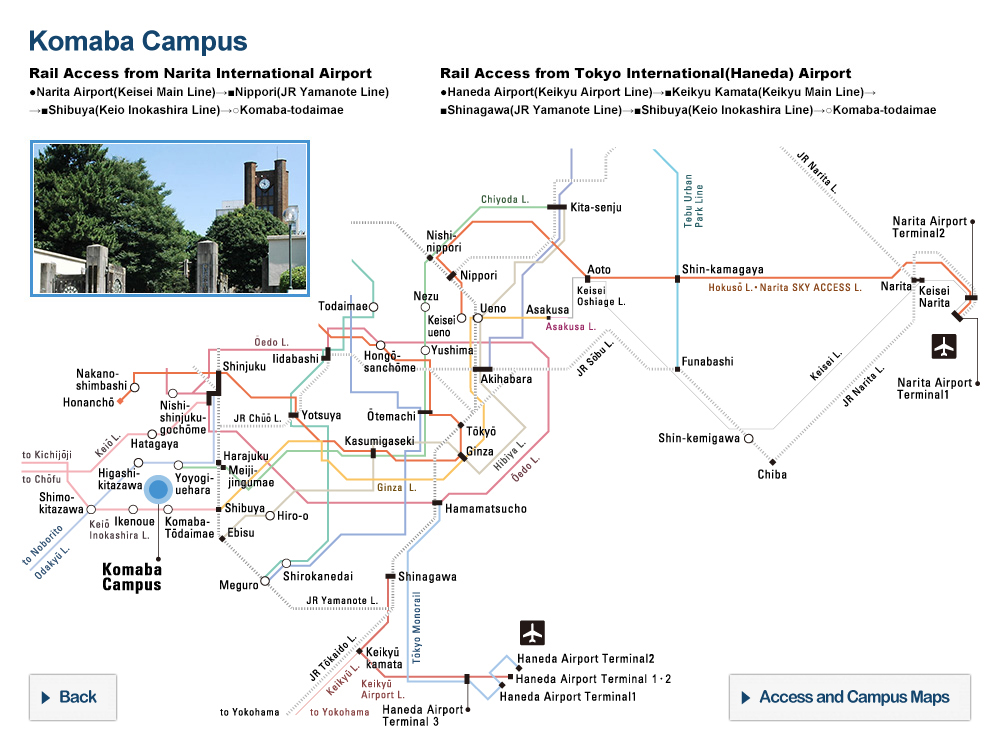

| Location | Komaba Area Campus |

| Venue | ENEOS Hall, Building #3-S, Komaba II Campus, Research Center for Advanced Science and Technology, The University of Tokyo |

| Capacity | 172 people |

| Entrance Fee | No charge |

| Registration Method | Advance registration required

Please register via Google form |

| Registration Period | May 11, 2026 — May 14, 2026 |

| Contact | [email protected] |

The Economic Security Intelligence Lab (ESIL) at the Research Center for Advanced Science and Technology (RCAST), The University of Tokyo, and the Asian Productivity Organization (APO) are honored to co-host a public symposium, featuring experts from OpenAI, United Nations University, and the Matsuo-Iwasawa Lab at the University of Tokyo, titled:

Building Safe AI:

Transparency, Accountability, and the Future Impact of Generative AI

As generative AI systems become increasingly embedded in business, government, education, research, and everyday life, the central challenge is no longer simply how to accelerate AI adoption, but how to build and govern AI systems that are safe, transparent, accountable, and beneficial to society. Recent developments in frontier AI, including OpenAI’s safety and intelligence reporting on the misuse of generative AI, have underscored both the transformative potential of advanced models and the emerging risks associated with influence operations, cybersecurity, critical infrastructure, and uneven institutional readiness. For Japan and the wider Indo-Pacific region, these issues are closely tied to economic security, democratic resilience, productivity, and international cooperation.

This public seminar will bring together perspectives from industry, international organizations, and academia to examine how safe and responsible AI can be advanced in practice. Dr. Tobias Peyerl, Head of the Strategic Intelligence and Analysis Team at OpenAI, brings deep expertise in safeguarding advanced AI systems from misuse and emerging threats, drawing on experience in global risk assessment, threat intelligence, and cross-sector partnerships, as well as prior leadership roles at Google and the United Nations. Professor Tshilidzi Marwala, Rector of United Nations University and Under-Secretary-General of the United Nations, brings a global governance and sustainable development perspective, drawing on his recent work on AI governance frameworks, the role of knowledge institutions, and the institutional conditions needed to ensure that AI serves the public good. Dr. Irene Li, Project Lecturer at the Matsuo-Iwasawa Laboratory, The University of Tokyo, brings a technical academic perspective grounded in research on trustworthy AI, multilingual large language model evaluation, medical reasoning, and inclusive AI development, with particular relevance to questions of reliability and accountability in real-world applications. The panel discussion will be moderated by Akira Igata (Project Lecturer, ESIL, RCAST, The University of Tokyo).

Held as part of the APO’s Genuine AI Action (GAIA) initiative, this seminar will situate global debates on AI safety and governance within Japan’s policy, economic, and societal context, while exploring how technical expertise, institutional accountability, and international cooperation can help societies harness the benefits of generative AI while addressing its risks.

【Welcome Remarks】

Dr. Indra Pradana Singawinata is the Secretary-General of the Asian Productivity Organization (APO). Prior to joining the APO, Dr. Singawinata was Senior Vice President at the Indonesia Infrastructure Guarantee Fund (IIGF). He holds a PhD in philosophy from Ritsumeikan Asia Pacific University (APU), Japan; a master’s degree in accounting from the University of Indonesia; and a bachelor’s degree in economics from Trisakti University, Indonesia.

【Panelists】

Dr. Tobias Peyerl is Head of the Strategic Intelligence and Analysis Team at OpenAI, which safeguards advanced AI systems from misuse and emerging threats. Since joining in 2024, he has driven risk assessments across global compute infrastructure, geopolitical environments, and national security, while scaling safety capabilities with external partners. Previously, Tobias led Intelligence Analysis and Trust Risk Governance at Google, directing cross-functional teams to manage platform-wide safety risks. He also served as a political affairs officer at the United Nations, contributing to the UN’s Plan of Action to Prevent Violent Extremism.

Tobias has guest lectured at UC Berkeley, USC, UCLA, and Columbia University. He holds a PhD in International Law from the Graduate Institute in Geneva and studied at Harvard Law School, Sciences Po Paris, NYU, and Humboldt University in Berlin.

Professor Tshilidzi Marwala is the Rector of United Nations University and Under-Secretary-General of the United Nations. Prof. Marwala has been a visiting scholar/professor at universities in the USA, the UK, China, and South Africa. He has extensive academic, policy, management, and international experience, and is a co-holder of five patents. His research has been multidisciplinary, involving the theory and applications of artificial intelligence to engineering, social science, economics, politics, finance, and medicine. He has served on a variety of global and national policymaking bodies, and has worked with such United Nations entities as UNESCO, UNICEF, WHO, and WIPO.

Prof. Marwala is, inter alia, a Fellow of the American Academy of Arts and Sciences, The World Academy of Sciences, the Academy of Science of South Africa, and the African Academy of Sciences.

Among the awards that Prof. Marwala has received are the Order of Mapungubwe (South Africa’s highest honour) and the Academy of Science of South Africa’s Science-for-Society Gold Medal. He was named the 2021 IT Personality of the Year by the Institute of IT Professionals South Africa.

Dr. Irene Li is a Project Lecturer at the Matsuo-Iwasawa Laboratory, The University of Tokyo. She holds a PhD from Yale University. Her research focuses on large language models (LLMs), multilingual natural language processing, and trustworthy AI, with a particular emphasis on medical reasoning and evaluation. She has developed benchmarks and frameworks to improve the reliability and safety of LLMs in real-world applications, particularly in low-resource and multilingual settings. She was recognized as one of MIT Technology Review’s Innovators Under 35 (Japan) in 2025.

【Moderator】

Akira Igata (Project Lecturer, RCAST, The University of Tokyo)

This symposium will be conducted in English.

Notes for participants

- For security reasons, no dangerous materials or food/beverages are allowed inside the venue. Please follow staff instructions during the event.

- Please present a valid photo ID (e.g., student ID or driver’s license) at the reception desk on the day of the event. For security reasons, participants who do not provide complete and accurate information regarding their identity, affiliation, and position may not be admitted.